Anti-scraping techniques | Web Scraping Tool | ScrapeStorm

Abstract:Anti-scraping techniques are technologies and methods used to protect websites and online data resources from automated scraping (usually scraping robots or scraping software). ScrapeStormFree Download

ScrapeStorm is a powerful, no-programming, easy-to-use artificial intelligence web scraping tool.

Introduction

Anti-scraping techniques are technologies and methods used to protect websites and online data resources from automated scraping (usually scraping robots or scraping software). The purpose of these mechanisms is to allow legitimate users of a website to access and use her website normally, while also limiting unauthorized data collection to protect privacy, data security, and network performance. or to prevent it.

Applicable Scene

Websites often want to protect their content and data to prevent illegal data collection and content theft. Anti-crawling mechanisms can prevent malicious scrapers from accessing your website data. In fact, almost any online activity that requires the protection of data and resources can potentially involve the use of anti-crawler mechanisms. They help maintain data integrity, protect privacy, reduce abuse, and ensure proper functioning of the network.

Pros: Anti-scraping mechanisms help websites protect their data, content, and resources from unauthorized crawling and misuse. By controlling and reducing scraping access, you can reduce server load and improve website performance and response speed. In competitive markets, anti-crawl mechanisms can also help reduce unfair practices by competitors, such as scraping pricing information or customer data.

Cons: Anti-scraping mechanisms may mistakenly identify normal users as malicious crawlers, restricting legitimate users and impacting the user experience. Some legitimate scraping, such as search engine scraping, can also be affected by anti-scraping mechanisms and require special handling.

Legend

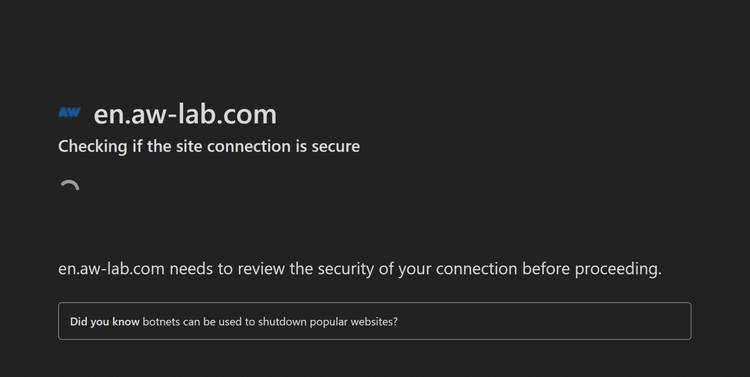

1. A JavaScript challenge.

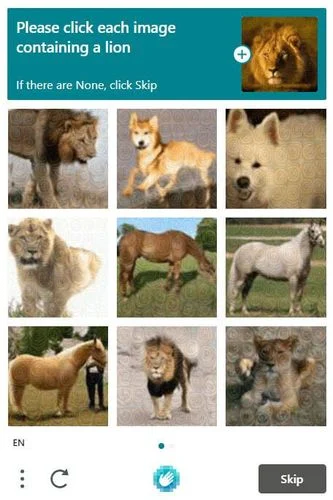

2. Captcha.

Related Article

Reference Link

https://www.zenrows.com/blog/anti-scraping#javascript-challenges

https://sm22896.medium.com/3-anti-scraping-techniques-you-may-encounter-70f7e5df3c03