Data extraction | Web Scraping Tool | ScrapeStorm

Abstract: Data extraction is the process of obtaining data from one or more data sources and importing it into a target database, data warehouse, or other storage device.

ScrapeStorm is a powerful, no-programming, easy-to-use artificial intelligence web scraping tool.

Introduction

Data extraction is the process of obtaining data from one or more data sources and importing it into a target database, data warehouse, or other storage device. It is usually carried out as part of an extract, transform, and load (ETL) process: extraction selects the required data from the source system, transformation cleans, converts, and restructures that data, and loading writes the result into the target system.
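The extract, transform, and load steps described above can be sketched in a few lines of Python. This is only a minimal illustration using the standard library, not how ScrapeStorm or any particular tool works: the inline CSV text, the `products` table, and the function names are all assumptions made up for the example.

```python
import csv
import io
import sqlite3

# Hypothetical source data; a real source could be a file, an API, or a web page.
SOURCE_CSV = """name,price,currency
 Widget ,19.99,usd
Gadget,,usd
Gizmo,5.50,USD
"""


def extract(text):
    """Extract: read the raw rows from the source."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: trim whitespace, normalize values, drop rows without a price."""
    cleaned = []
    for row in rows:
        price = row["price"].strip()
        if not price:
            continue  # skip incomplete records
        cleaned.append(
            (row["name"].strip(), float(price), row["currency"].strip().upper())
        )
    return cleaned


def load(records, conn):
    """Load: write the cleaned records into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS products (name TEXT, price REAL, currency TEXT)"
    )
    conn.executemany("INSERT INTO products VALUES (?, ?, ?)", records)
    conn.commit()


# An in-memory SQLite database stands in for the target data store.
conn = sqlite3.connect(":memory:")
load(transform(extract(SOURCE_CSV)), conn)

rows = conn.execute(
    "SELECT name, price, currency FROM products ORDER BY name"
).fetchall()
print(rows)
```

Keeping the three steps as separate functions means a different source or target can be swapped in without touching the cleaning logic.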

Applicable Scenarios

Data extraction is often used to integrate data from multiple sources into a single data store for analysis, reporting, and business decision-making.

Pros: Data extraction is essential for obtaining and analyzing the information an organization needs. Businesses and organizations can collect a variety of information, such as market trends, competitive intelligence, and customer feedback. Accurate extraction and analysis support decision-making and help in developing effective, data-driven strategies. The extraction process can also be automated, which reduces human error and makes the work more efficient.

Cons: Data quality varies from source to source, and extracted data may contain inaccurate information, so quality control is required. Extracting and sharing data raises privacy and security concerns, and care must be taken when handling personal information and confidential data. Data extraction can also be costly and resource-intensive: it requires the infrastructure and skills to collect, store, manage, and analyze data.
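The quality and privacy concerns above are often handled with a validation step before extracted records are stored or shared. The sketch below is one hedged way to do this in Python. The record fields, the simple email pattern, and the `validate` and `mask_email` helpers are hypothetical choices for illustration, not a complete quality or privacy solution.

```python
import re

# Deliberately simple pattern; real email validation is more involved.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate(record):
    """Return a list of quality problems found in one extracted record."""
    problems = []
    if not record.get("name", "").strip():
        problems.append("missing name")
    if not EMAIL_RE.match(record.get("email", "")):
        problems.append("invalid email")
    return problems


def mask_email(email):
    """Hide most of the local part so the address is not exposed in reports."""
    local, _, domain = email.partition("@")
    return local[:1] + "***@" + domain


# Hypothetical extracted records.
records = [
    {"name": "Ada", "email": "ada@example.com"},
    {"name": "", "email": "not-an-email"},
]

valid = [r for r in records if not validate(r)]
rejected = [(r, validate(r)) for r in records if validate(r)]
masked = [mask_email(r["email"]) for r in valid]

print(masked)
print(rejected)
```

Rejected records are kept together with the reasons for rejection, so that problems in a source can be reviewed rather than silently discarded.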

Legend

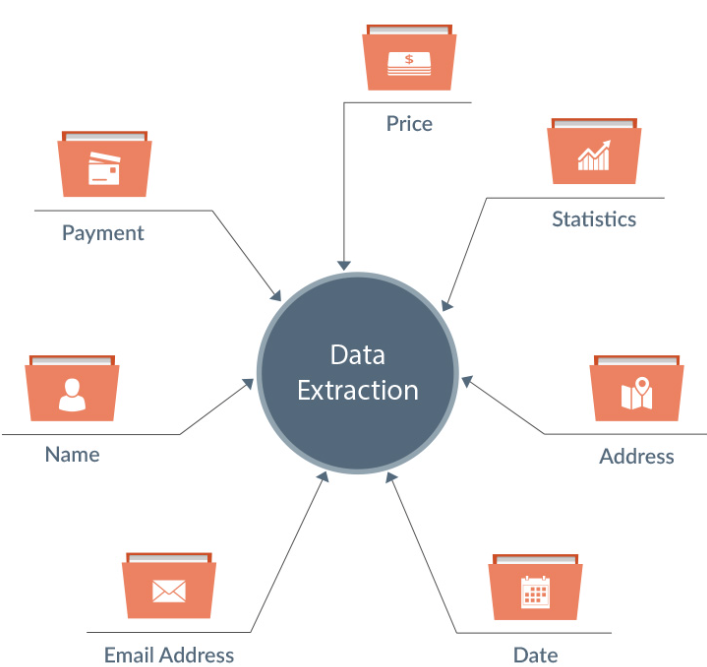

1. Data extraction.

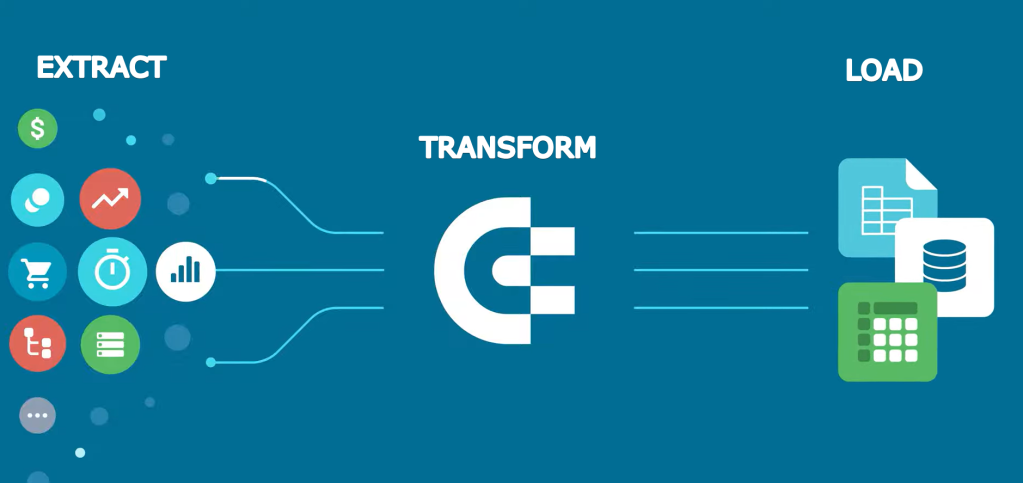

2. Data extraction workflow.

Reference Link

https://en.wikipedia.org/wiki/Data_extraction

https://www.talend.com/resources/data-extraction-defined/

https://www.reltio.com/glossary/data-integration/what-is-data-extraction/