Web crawler | Web Scraping Tool | ScrapeStorm

Abstract: A web crawler, also known as a web spider, is an automated program or script designed to browse the Internet to collect information and data or to perform specific tasks.

ScrapeStorm is a powerful, no-programming, easy-to-use artificial intelligence web scraping tool.

Introduction

A web crawler, also known as a web spider, is an automated program or script designed to browse the Internet to collect information and data or to perform specific tasks. These tasks can include search engine indexing, data mining, price comparison, content scraping, automated testing, and more.
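
The browse-and-collect loop described above can be illustrated with a minimal sketch. It assumes a breadth-first strategy and a caller-supplied `fetch` function; the names `crawl`, `LinkExtractor`, and `FAKE_SITE`, as well as the `example.test` URLs, are hypothetical and exist only so the example runs without network access. A real crawler would fetch pages over HTTP and add politeness delays, error handling, and storage.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urldefrag


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(start_url, fetch, max_pages=10):
    """Breadth-first crawl. `fetch(url)` returns HTML text, or None on failure."""
    seen = {start_url}          # URLs already queued, to avoid revisiting
    queue = deque([start_url])  # frontier of URLs still to visit
    visited = []                # pages successfully fetched, in order
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        visited.append(url)
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            # Resolve relative links against the current page and drop #fragments.
            link, _ = urldefrag(urljoin(url, href))
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return visited


# Hypothetical in-memory "site" standing in for real HTTP responses.
FAKE_SITE = {
    "http://example.test/": '<a href="/a">A</a> <a href="/b">B</a>',
    "http://example.test/a": '<a href="/b">B</a> <a href="/">home</a>',
    "http://example.test/b": '<a href="/missing">gone</a>',
}

pages = crawl("http://example.test/", FAKE_SITE.get)
print(pages)
```

Passing `fetch` as a parameter keeps the traversal logic separate from how pages are downloaded, so the same loop could be pointed at a real HTTP client.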

Applicable Scenarios

Web crawlers are automated tools used in many fields. They can build search engine indexes, collect and mine data, drive monitoring and alerting, gather text for natural language processing, and support social media analysis, e-commerce and price comparison, academic research, content aggregation, security applications, and IoT device monitoring. In these scenarios, automated data collection can improve work efficiency and support more accurate decisions.

Pros: Web crawlers give users an automated way to collect Internet data, which helps them obtain information and supports decision-making. They offer high efficiency, accuracy, and the ability to operate at large scale.

Cons: Web crawlers can raise privacy and ethical concerns, and websites may restrict or block automated access.
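
One common form of website restriction is a `robots.txt` file, in which a site states which paths crawlers may visit. The sketch below shows how a crawler might check such rules before fetching a page, using Python's standard `urllib.robotparser` module. The rules in `ROBOTS_TXT`, the `example-bot` user agent, and the `example.test` URLs are made up for illustration; a real crawler would download the file from the target site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, parsed from memory instead of downloaded.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 2
"""

rules = RobotFileParser()
rules.parse(ROBOTS_TXT.splitlines())


def is_allowed(url, user_agent="example-bot"):
    """Return True if the parsed rules permit `user_agent` to fetch `url`."""
    return rules.can_fetch(user_agent, url)


print(is_allowed("http://example.test/public/page.html"))
print(is_allowed("http://example.test/private/data.html"))
print(rules.crawl_delay("example-bot"))
```

Honoring `robots.txt` is a convention, not an enforcement mechanism, so a site may still apply other limits such as rate limiting or blocking.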

Legend

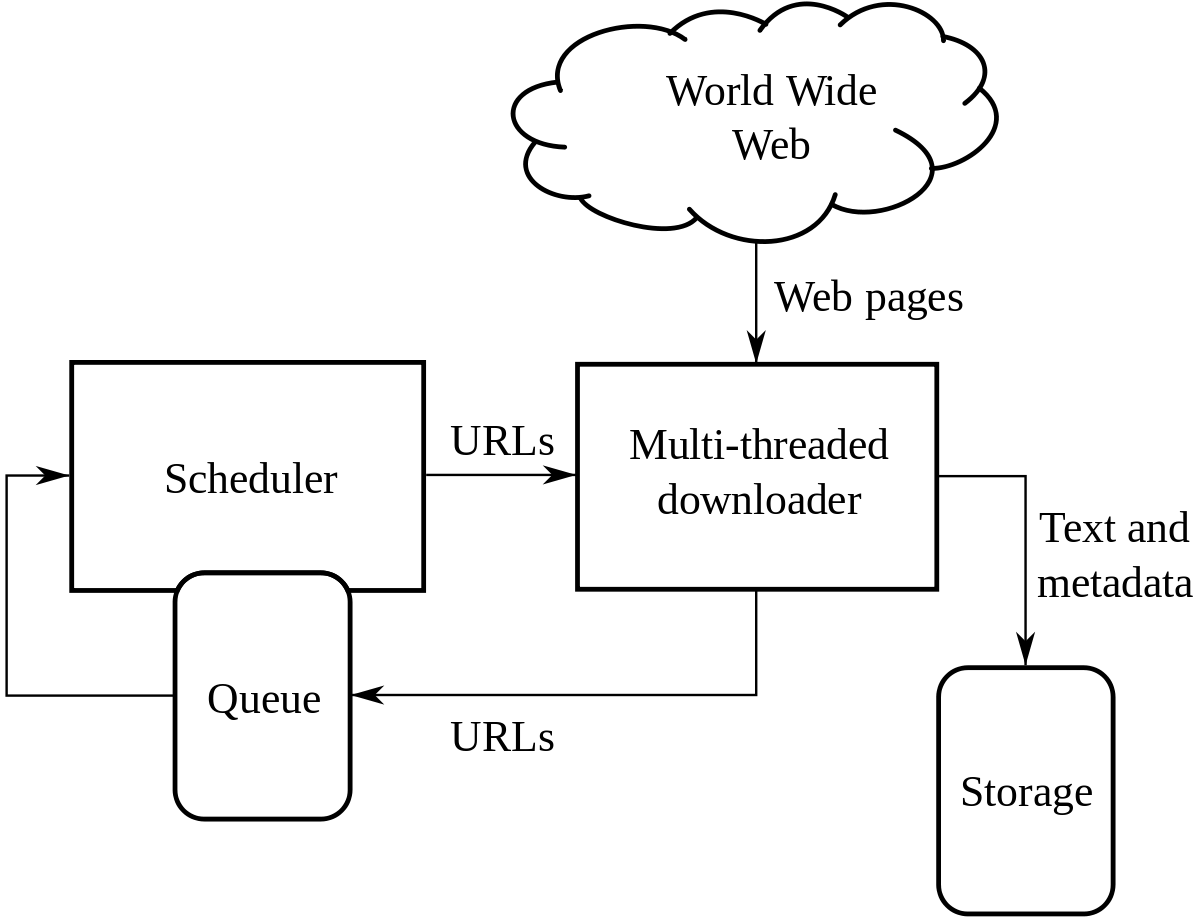

1. Architecture of a web crawler.

2. The web crawling process.

Reference Links

https://en.wikipedia.org/wiki/Web_crawler

https://www.cloudflare.com/learning/bots/what-is-a-web-crawler/