Data collection | Web Scraping Tool | ScrapeStorm

Abstract: Data collection is the process of gathering and retrieving data from a variety of sources and devices, including sensors, equipment, computer systems, networks, and mobile devices.

ScrapeStorm is an AI-powered web scraping tool that is easy to use and requires no programming.

Introduction

Data collection is the process of gathering and retrieving data from a variety of sources and devices, including sensors, equipment, computer systems, networks, and mobile devices.

Applicable Scenarios

The purpose of data collection is to summarize, store, and process scattered data for subsequent analysis, reporting, decision-making, and other purposes.

Pros: Data collection helps organizations obtain valuable information and insights from many sources in support of their decisions and business objectives. It enables real-time monitoring and control, which improves efficiency, safety, and reliability. It also supports data analysis, forecasting, and optimization, allowing organizations to better understand customer needs, market trends, and business performance.

Cons: Data collection can involve significant costs for hardware, software, networking, and personnel. The collected data may contain noise and inaccurate values, so it must be cleaned and processed before use. Data privacy and security are also important concerns: collection may involve transmitting and storing sensitive information, which requires appropriate security measures. Finally, large amounts of data that are not carefully managed can lead to data leaks and the accumulation of unnecessary data.
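The cleaning step mentioned above can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the function name `clean_readings`, the `(sensor_id, value)` row layout, and the valid range of -40 to 85 are hypothetical, and a real pipeline would pick its own rules.

```python
def clean_readings(raw_rows, low=-40.0, high=85.0):
    """Return de-duplicated (sensor_id, reading) pairs with valid numeric values.

    raw_rows is an iterable of (sensor_id, value) pairs as they might arrive
    from a collector. The range limits are illustrative assumptions.
    """
    cleaned = []
    seen = set()
    for sensor_id, value in raw_rows:
        # Normalize the identifier so " S1 " and "s1" count as the same sensor.
        sensor_id = sensor_id.strip().lower()
        try:
            reading = float(value)
        except (TypeError, ValueError):
            continue  # drop missing or non-numeric values
        if not (low <= reading <= high):
            continue  # drop out-of-range values (this also rejects NaN)
        key = (sensor_id, reading)
        if key in seen:
            continue  # drop exact duplicates
        seen.add(key)
        cleaned.append(key)
    return cleaned


# Hypothetical raw input containing a duplicate, bad values, and an outlier.
raw = [(" S1 ", "21.5"), ("s1", "21.5"), ("s2", "n/a"),
       ("s3", "999"), ("s4", None), ("s5", "-3")]
print(clean_readings(raw))
```

Treating identical (sensor, value) pairs as duplicates is a simplification; real data would usually carry a timestamp to tell repeated readings apart.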

Legend

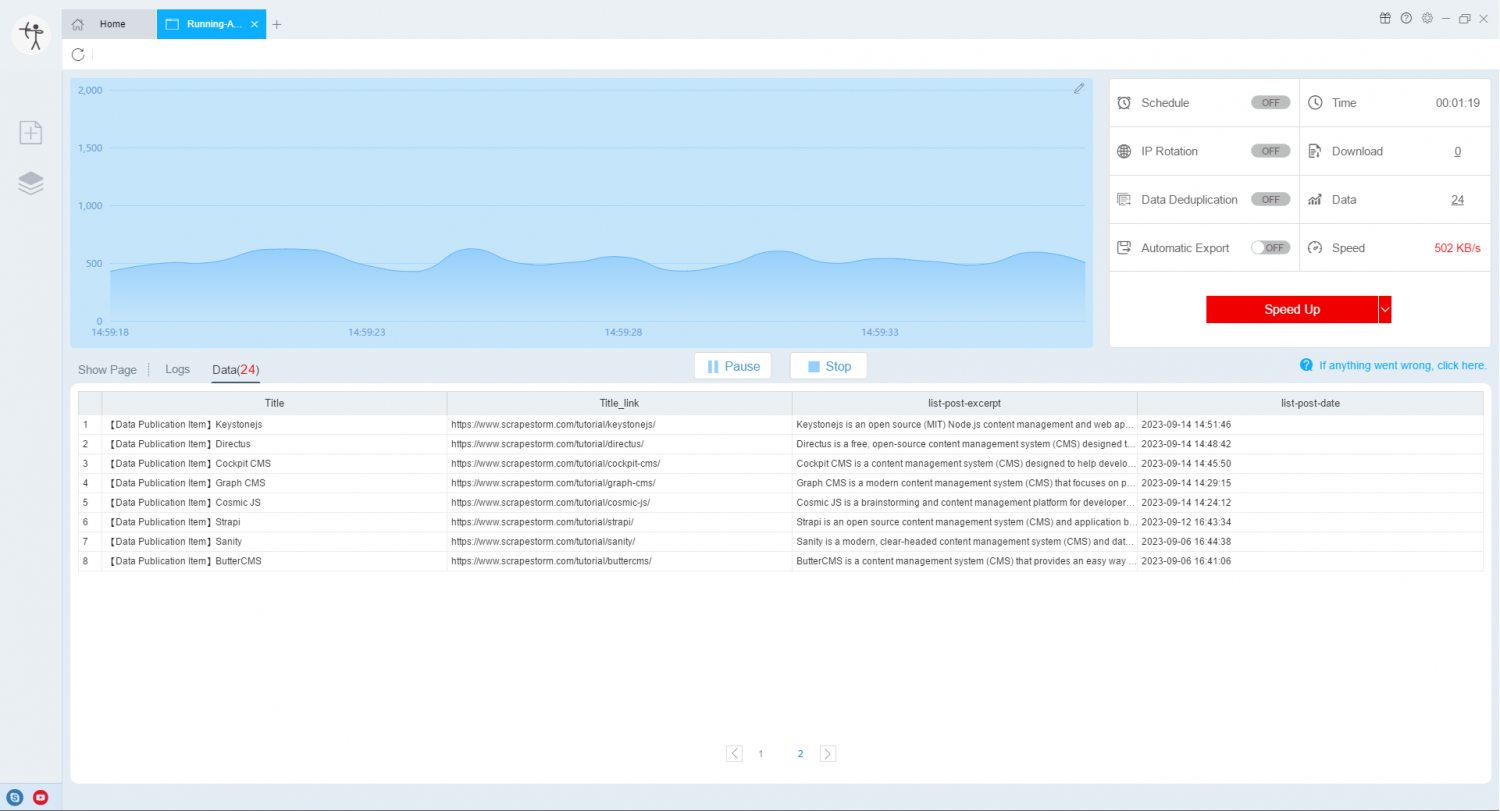

1. Extract data using ScrapeStorm.

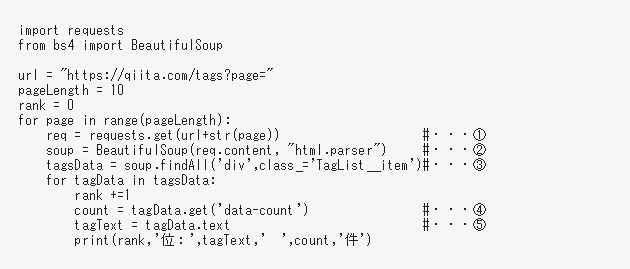

2. Extract data using Python.
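As a rough idea of what extracting data with Python can look like, here is a small sketch that uses only the standard library's `html.parser` module to pull link text and URLs out of an HTML string. The names `LinkCollector`, `extract_links`, and `SAMPLE_HTML` are hypothetical, and the sample markup stands in for a page that a real script would first download.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects (text, href) pairs for every <a href="..."> in a document."""

    def __init__(self):
        super().__init__()
        self.links = []     # finished (text, href) pairs
        self._href = None   # href of the <a> tag currently open, if any
        self._text = []     # text fragments seen inside that tag

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append(("".join(self._text).strip(), self._href))
            self._href = None


def extract_links(html):
    """Parse an HTML string and return its links as (text, href) pairs."""
    parser = LinkCollector()
    parser.feed(html)
    parser.close()
    return parser.links


# Hypothetical sample page used instead of a live download.
SAMPLE_HTML = """
<ul>
  <li><a href="/products/1">Widget</a></li>
  <li><a href="/products/2">Gadget</a></li>
</ul>
"""

for text, href in extract_links(SAMPLE_HTML):
    print(text, href)
```

Anchors without an `href` attribute are skipped in this sketch. Dedicated scraping libraries handle malformed markup and pagination more robustly than this minimal parser.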
